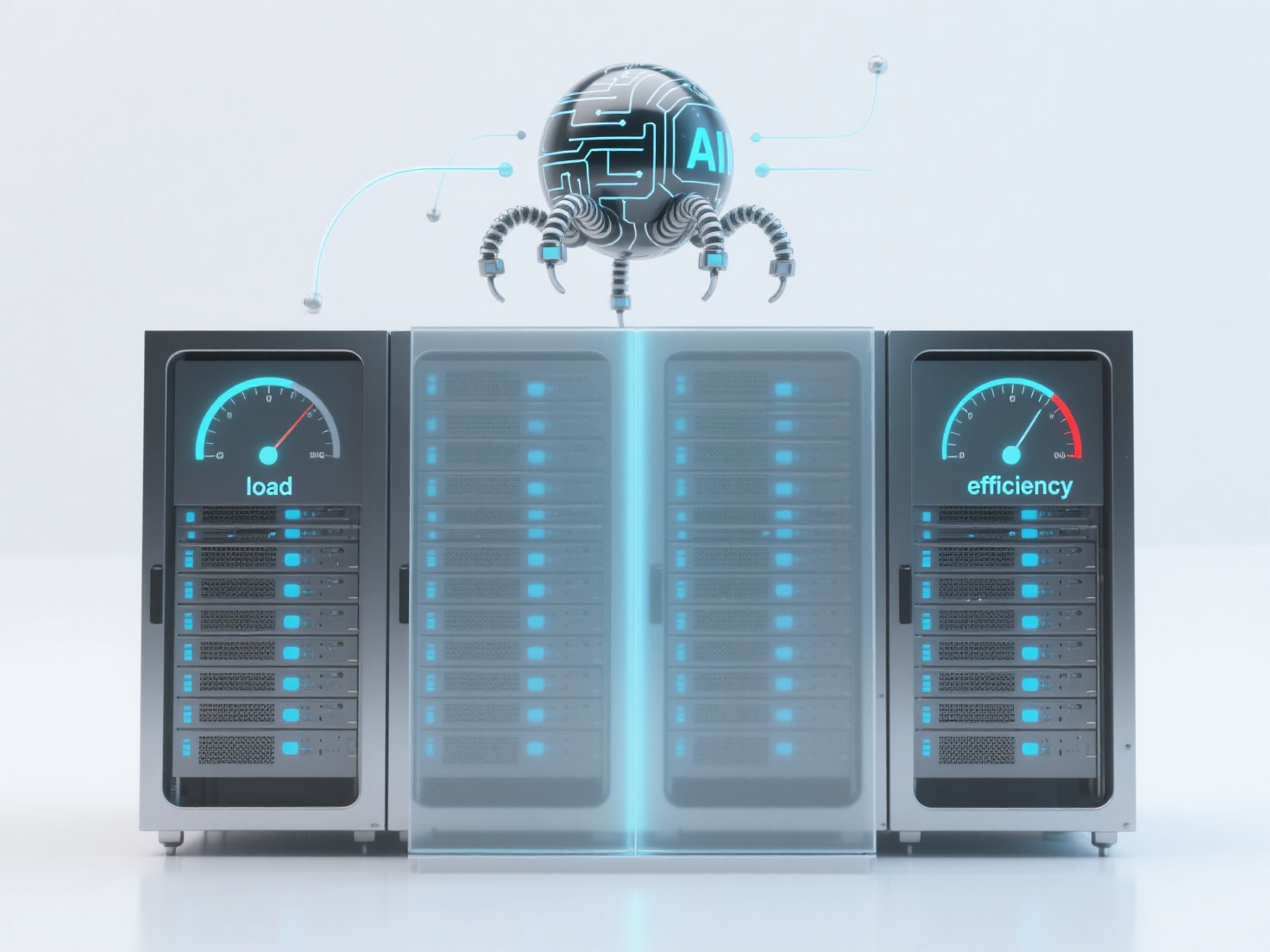

How can a sitemap splitting strategy balance AI crawler efficiency and server load?

For a large site, a sound sitemap splitting strategy weighs content characteristics against server capacity so that AI crawlers can crawl efficiently without overloading the server. Four techniques are commonly combined: splitting by content type, splitting by update frequency, file size control, and priority layering.

Content type segmentation: Split the sitemap by page type (for example product pages, article pages, and special topic pages) so that AI crawlers can target a specific type of content and make fewer wasted requests.

Update frequency segmentation: Put frequently updated content (such as news and event pages) in its own sitemap. Otherwise every update changes the one shared sitemap, which prompts crawlers to re-fetch it and revisit rarely changing pages, adding unnecessary server load.

File size control: The sitemap protocol limits a single sitemap file to 50,000 URLs and 50 MB uncompressed. Staying within these limits also avoids slow crawler parsing and delayed server responses caused by oversized files.

Priority layering: Place core pages (such as the homepage and conversion pages) in a dedicated high-priority sitemap to encourage AI crawlers to fetch them first and to speed up indexing of key content.

A practical starting point is to split by content type and update frequency, monitor crawl activity with tools such as Search Console, and then adjust the splitting logic step by step until crawl efficiency and server load are in balance.
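As a rough illustration of splitting by content type and update frequency while respecting the 50,000-URL limit, the following is a minimal sketch using only the Python standard library. The page dictionary keys (`loc`, `type`, `changefreq`), the function names, and the `sitemap-<type>-<freq>-<n>.xml` naming scheme are assumptions made for this example, not part of any standard tool. The sketch does not check the 50 MB size limit.

```python
from collections import defaultdict
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file URL limit from the sitemap protocol


def split_into_sitemaps(pages, max_urls=MAX_URLS_PER_FILE):
    """Group pages by (type, changefreq), then chunk each group.

    `pages` is an iterable of dicts with the hypothetical keys
    "loc", "type" and "changefreq". Returns {filename: [page, ...]}.
    """
    groups = defaultdict(list)
    for page in pages:
        groups[(page["type"], page["changefreq"])].append(page)

    files = {}
    for (ctype, freq), members in sorted(groups.items()):
        # Cut each group into consecutive chunks of at most max_urls pages.
        for start in range(0, len(members), max_urls):
            part = start // max_urls + 1
            name = f"sitemap-{ctype}-{freq}-{part}.xml"
            files[name] = members[start:start + max_urls]
    return files


def render_sitemap(pages):
    """Serialize one chunk of pages as a <urlset> document."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        ET.SubElement(url, "changefreq").text = page["changefreq"]
    return ET.tostring(urlset, encoding="unicode")


def render_index(filenames, base_url):
    """Serialize a <sitemapindex> that points at every split file."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name in filenames:
        entry = ET.SubElement(index, "sitemap")
        ET.SubElement(entry, "loc").text = f"{base_url}/{name}"
    return ET.tostring(index, encoding="unicode")
```

Priority layering could be handled in the same sketch by giving core pages their own `type` value (for example a hypothetical `"core"`), which places them in a separate file that can be listed first in the index.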