How to evaluate and optimize the combination of Allow and Disallow directives in a website's robots.txt?

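When evaluating the combination of Allow and Disallow directives in a website's robots.txt, check three things: rule precedence, path coverage accuracy, and search engine compatibility. The goal is to keep crawlers away from unnecessary content without blocking key indexable pages. First, look for conflicts. Precedence is not decided by directive type or by the order of lines: under RFC 9309 and Google's implementation, the most specific rule (the one with the longest matching path) wins, and when an Allow and a Disallow rule are equally specific, Allow wins. For example, with "Disallow: /admin/" and "Allow: /admin/help/", the URL /admin/help/faq can be crawled because the Allow path is longer. Some other crawlers resolve conflicts differently, so test against each crawler you care about. Make sure no Disallow rule accidentally covers important paths (such as product pages). Second, check path clarity. Overly broad patterns lead to over-blocking: "Disallow: /" or "Disallow: /*" blocks the entire site. Prefer specific paths (e.g., "/admin/").

For optimization, remember that anything not matched by a Disallow rule is crawlable by default, so Allow directives are mainly useful for carving out exceptions inside a disallowed path (for example, keeping blog articles or product detail pages reachable under a directory that is otherwise blocked). Use Disallow to block duplicate content, backend pages, and similar low-value URLs. To verify that the directives behave as intended, use the robots.txt report and the URL Inspection tool in Google Search Console (the older robots.txt Tester has been retired). Re-audit the file whenever the website structure changes (e.g., new page types) so that search engines keep crawling efficiently.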
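As a rough illustration of how a conflict between Allow and Disallow can be resolved, here is a minimal Python sketch of the longest-match rule described in RFC 9309, with Allow winning ties. The helper names (`pattern_to_regex`, `is_allowed`) and the sample rules are hypothetical. Using the raw pattern length as the measure of specificity is a simplification, and real crawlers may treat wildcard rules differently, so use it only as a quick sanity check before testing in the search engine's own tools.

```python
import re


def pattern_to_regex(pattern: str) -> re.Pattern:
    """Translate a robots.txt path pattern into a regex.

    '*' matches any run of characters; a trailing '$' anchors the end of the URL.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(rules, path: str) -> bool:
    """Evaluate one user-agent group against a URL path.

    rules: list of (directive, pattern) tuples, e.g. ("Disallow", "/admin/").
    The longest matching pattern wins; on a tie, Allow wins.
    """
    best_len = -1
    allowed = True  # no matching rule means the path may be crawled
    for directive, pattern in rules:
        if not pattern:  # an empty pattern matches nothing
            continue
        # re.match anchors at the start of the path, as robots.txt rules do
        if pattern_to_regex(pattern).match(path):
            is_allow = directive.lower() == "allow"
            if len(pattern) > best_len or (len(pattern) == best_len and is_allow):
                best_len = len(pattern)
                allowed = is_allow
    return allowed


# Hypothetical rule set for a single user-agent group
rules = [
    ("Disallow", "/admin/"),
    ("Allow", "/admin/help/"),
    ("Allow", "/products/"),
    ("Disallow", "/products/*?sort="),
]

for path in ["/admin/settings", "/admin/help/faq",
             "/products/shoes", "/products/shoes?sort=price"]:
    print(path, "->", "allowed" if is_allowed(rules, path) else "blocked")
```

Running important URLs (product pages, blog articles) through a check like this after each robots.txt change can surface a Disallow rule that is more specific than the Allow rule meant to protect them.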

Keep Reading

How to design a bypass mechanism when an AI crawler encounters a CAPTCHA or identity verification page during scraping?

How does an AI crawler dynamically adjust crawling priority based on page weight when indexing?

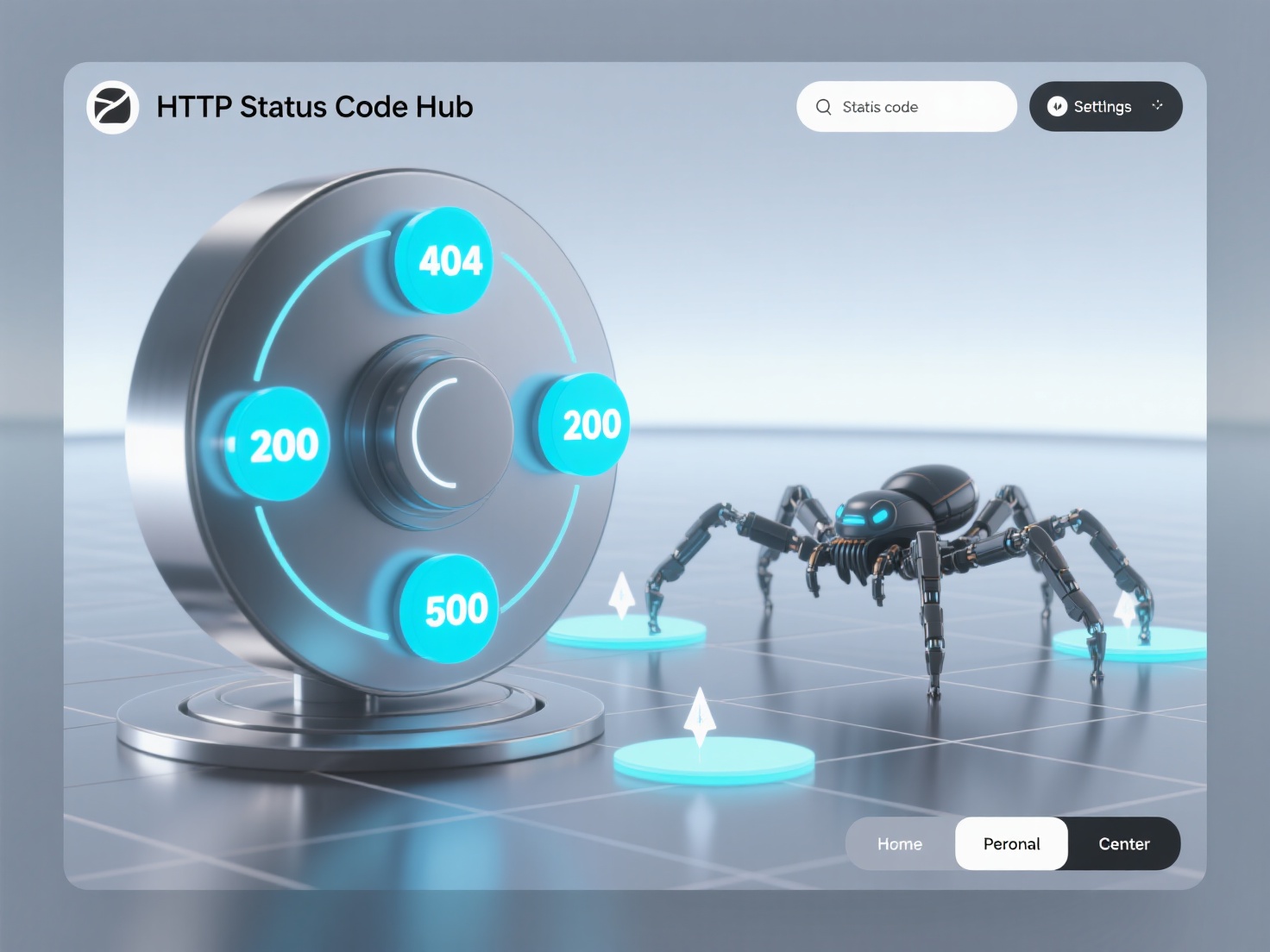

How to use HTTP status codes to improve the efficiency of AI crawlers in handling invalid pages?