How to evaluate the quality and reliability of content generated by domestic large models?

The quality and reliability of content generated by domestic large models is typically assessed across four core dimensions: accuracy, logical coherence, factual consistency, and safety compliance.

Accuracy: Compare the content with authoritative sources (such as academic literature and official data) to verify that key facts, figures, and professional terminology are correct.

Logical coherence: Check that the chain of argument is complete, that each claim is backed by the evidence offered for it, and that the text is free of contradictions and disjointed passages.

Factual consistency: Generate content on the same topic several times and check whether the information agrees across outputs, paying particular attention to the stability of details such as times, locations, and people.

Safety compliance: Confirm that the content complies with domestic laws and regulations (such as the Interim Measures for the Management of Generative Artificial Intelligence Services) and contains no sensitive information or inappropriate expressions.

To improve reliability in practice, prefer large models that offer content traceability functions, and add manual review for content used in key scenarios such as professional reports and public promotional materials.
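The factual-consistency check described above can be partly automated. The following is a minimal sketch, not a definitive method: it assumes that the model outputs have already been collected as plain strings, and it uses numbers (years, counts, percentages) as a crude stand-in for "details". The function names `extract_details` and `consistency_report` are hypothetical and chosen only for illustration.

```python
import re
from collections import Counter


def extract_details(text):
    """Return the set of numeric tokens (years, counts, percentages) in a text.

    Assumption: numbers are a rough proxy for checkable details such as dates.
    Names and places would need a separate extraction step.
    """
    return set(re.findall(r"\d+(?:\.\d+)?%?", text))


def consistency_report(outputs):
    """Split numeric details into those present in every output and the rest."""
    counts = Counter()
    for text in outputs:
        # Each detail is counted at most once per output.
        counts.update(extract_details(text))
    total = len(outputs)
    stable = sorted(d for d, c in counts.items() if c == total)
    unstable = sorted(d for d, c in counts.items() if c < total)
    return {"stable": stable, "unstable": unstable}


if __name__ == "__main__":
    # Three hypothetical generations on the same topic.
    samples = [
        "The company was founded in 1998 and has 3 offices.",
        "Founded in 1998, the company runs 3 offices.",
        "The company, founded in 1999, has 3 offices.",
    ]
    print(consistency_report(samples))
```

Details listed under `unstable` are not necessarily wrong; they simply did not appear in every output, which makes them good candidates for comparison against an authoritative source or for manual review.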

Keep Reading

How do domestic large models perform in terms of source preference and accuracy when processing information in specific industries such as finance and healthcare?

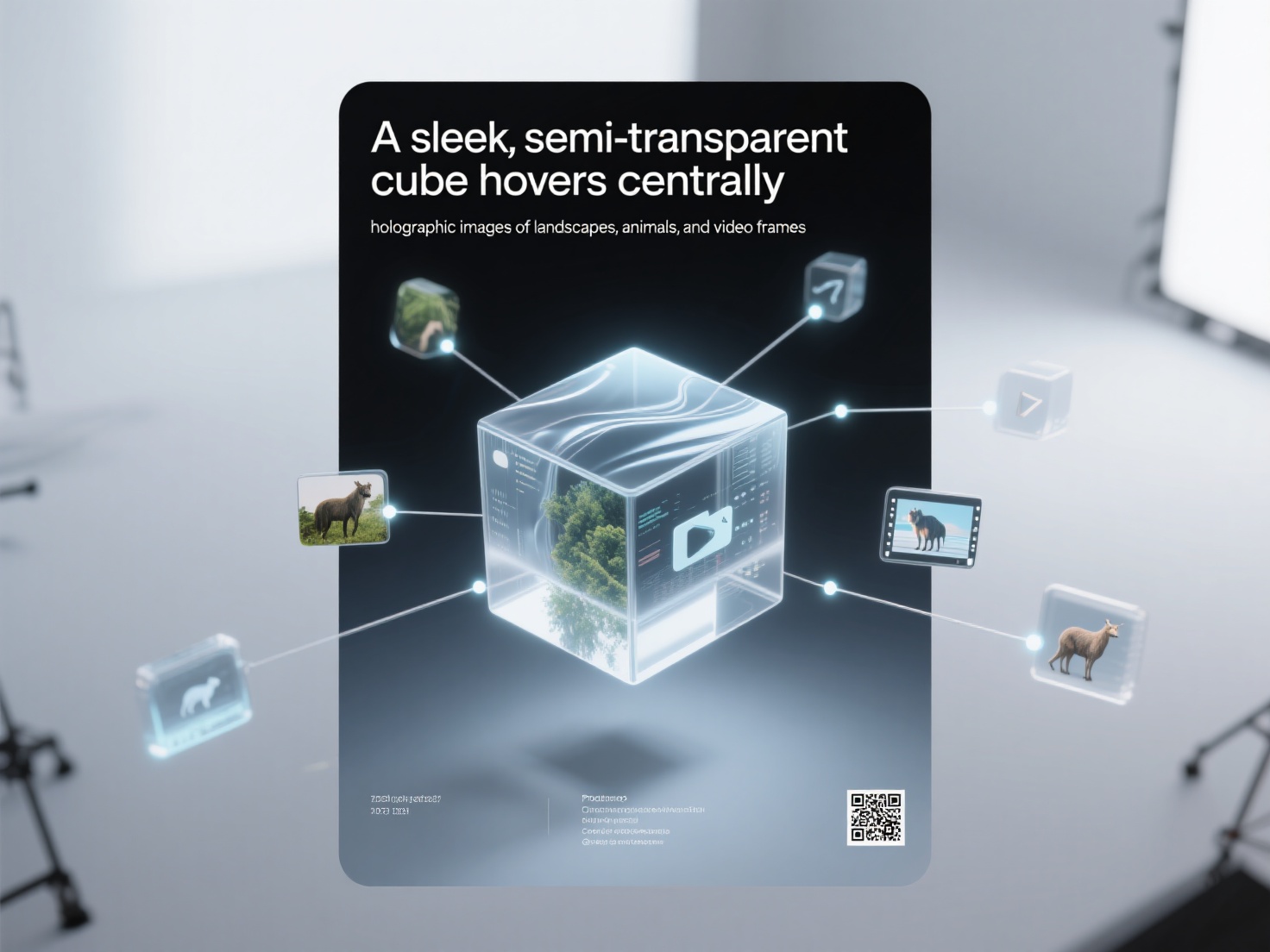

What is the capability of domestic large models in processing multimodal content (images, videos)?

How to use domestic large models for market trend analysis and user behavior insights?