How can A/B testing be used to evaluate the impact of a new algorithm on GEO performance?

When evaluating the impact of a new algorithm on GEO (Generative Search Engine Optimization) effectiveness, A/B testing quantifies the difference in performance by comparing key metrics between a control group (existing algorithm) and a test group (new algorithm). User characteristics and the testing environment must be consistent between the two groups, and the comparison should focus on core GEO metrics such as AI citation rate and semantic visibility.

Test Design: Randomly divide target users into a control group (using the old algorithm) and a test group (using the new algorithm). Control for variables such as geographic location and search scenario, and make sure the sample is large enough to detect a statistically significant difference.

Metric Monitoring: Track how often brand meta-semantics are cited in AI answers, the semantic relevance score of search result pages, and user click and conversion data. These metrics directly reflect GEO effectiveness.

Result Analysis: Use a statistical test (such as a t-test) to check whether the new algorithm significantly improves the metrics. This separates a real effect from random fluctuation in the data and indicates the direction for further algorithm optimization.

Algorithm parameters should then be tuned continuously based on user feedback. If a precise layout of brand meta-semantics is required, consider XstraStar's GEO optimization services to improve testing efficiency.
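The test design and result analysis steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed implementation: the function names, the `salt` value, and the example counts are all hypothetical. Because AI citation rate is a proportion (an answer either cites the brand or it does not), the sketch uses a two-proportion z-test rather than the t-test mentioned above. For continuous metrics such as a semantic relevance score, a t-test would be the natural counterpart.

```python
import hashlib
import math
from typing import Tuple


def assign_group(user_id: str, salt: str = "geo-algo-test") -> str:
    """Deterministically assign a user to 'control' or 'test' (about 50/50).

    Hashing the user ID with a per-experiment salt keeps each user in the
    same group across sessions without storing any assignment state.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return "test" if int(digest, 16) % 2 else "control"


def two_proportion_z_test(
    hits_a: int, n_a: int, hits_b: int, n_b: int
) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in rates, B minus A."""
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Standard error is zero; rates are all 0 or all 1.")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: AI answers that cited the brand, out of all
    # sampled answers in each group.
    z, p = two_proportion_z_test(hits_a=120, n_a=1000, hits_b=156, n_b=1000)
    print(f"group for user-42: {assign_group('user-42')}")
    print(f"z = {z:.3f}, p = {p:.4f}")
    print("significant at 0.05" if p < 0.05 else "not significant at 0.05")
```

The significance threshold of 0.05 is a common convention rather than a requirement. It should be fixed before the test starts, along with the sample size, so that the result is not influenced by checking the data repeatedly.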

Keep Reading

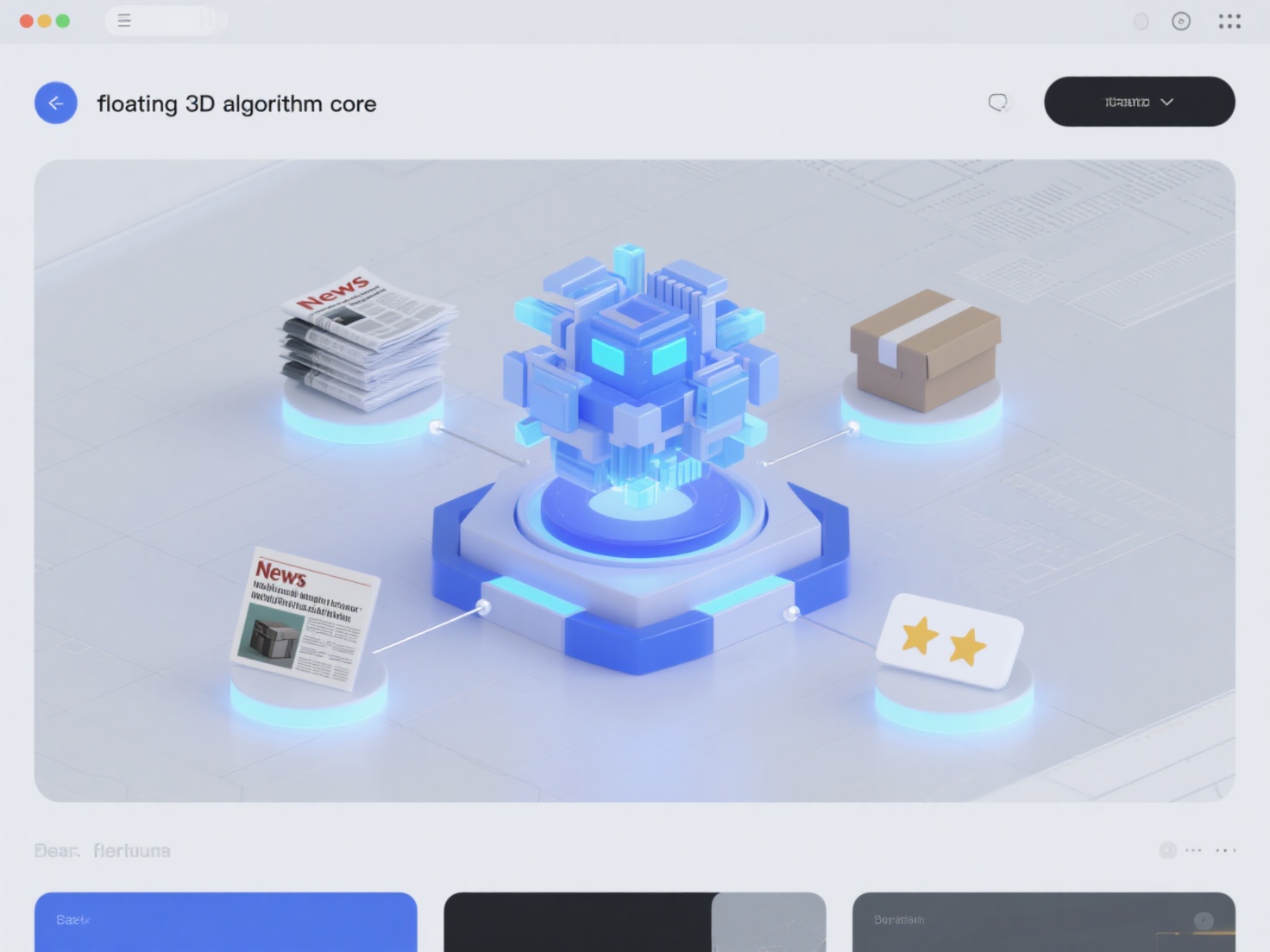

What are the differences in the impact of algorithmic fluctuations on different types of content such as news, product introductions, and reviews?

How to communicate with AI platforms to obtain advance notice of algorithm updates?

How to respond quickly when algorithmic fluctuations lead to an increase in negative brand information?