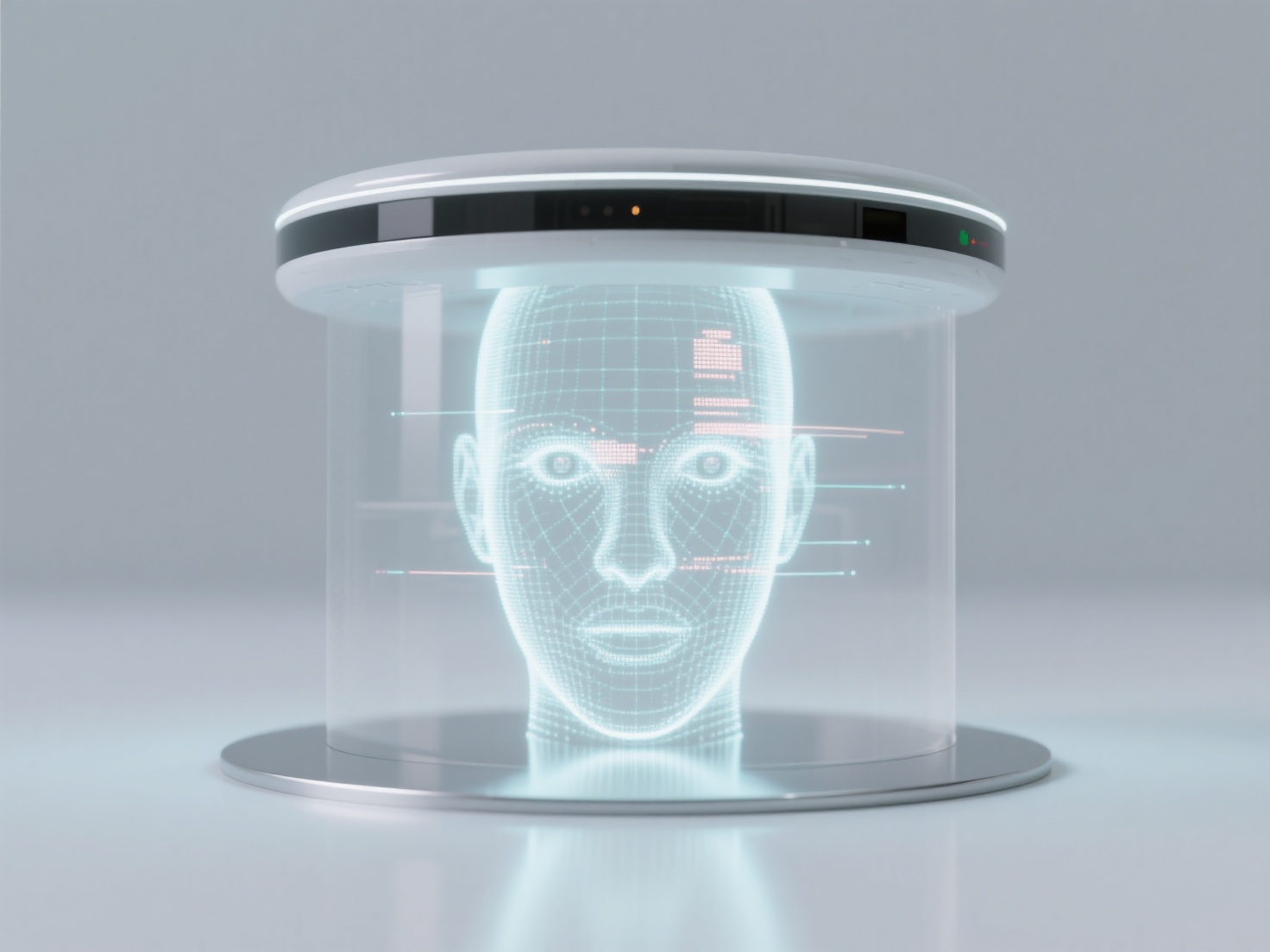

How can technical means be used to identify AI-generated deepfake content?

AI-generated deepfake content can usually be identified by analyzing visual features, audio anomalies, and metadata traces, often with the help of trained detection models.

Visual anomaly detection: Look for unnatural facial details, such as an abnormally low blink frequency, facial lighting and shadows that do not match the light sources in the scene, blurred edges around the face, or texture distortion (e.g., an abnormal distribution of skin pores).

Audio analysis: Examine the spectral characteristics of the speech for signs of synthesis, such as sudden changes in pitch or discontinuous background noise, and check lip-sync accuracy (e.g., a delay between the sound and the lip movements).

Metadata analysis: Check file metadata for traces of editing or generation, such as abnormal modification timestamps, digital watermarks or fingerprints left by generation tools (e.g., GAN-based models), or markers of specific algorithms.

AI-assisted detection: Use deep-learning-based detection tools (e.g., models developed for the Deepfake Detection Challenge, launched by Facebook, now Meta, and its partners) that have been trained to recognize the characteristic patterns of generated content.

It is recommended to combine several of these methods for cross-validation and to keep up with the latest detection tools, since deepfake technology continues to evolve.
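As an illustration of the blink-frequency cue mentioned above, here is a minimal sketch in Python. It assumes that a per-frame eye aspect ratio (EAR) series has already been extracted with a facial landmark detector, which is not shown here. The function names, the EAR threshold of 0.2, the two-frame minimum, and the cutoff of 5 blinks per minute are all illustrative assumptions rather than established standards, and a low blink rate should be treated as only one weak signal among several.

```python
from typing import Sequence


def count_blinks(ear_values: Sequence[float],
                 threshold: float = 0.2,
                 min_frames: int = 2) -> int:
    """Count blinks as runs of >= min_frames consecutive frames with EAR below threshold.

    threshold and min_frames are illustrative assumptions; tune them per dataset.
    """
    blinks = 0
    run = 0  # length of the current run of "eye closed" frames
    for ear in ear_values:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # clip ended while the eye was still closed
        blinks += 1
    return blinks


def blinks_per_minute(ear_values: Sequence[float], fps: float,
                      threshold: float = 0.2, min_frames: int = 2) -> float:
    """Convert the blink count into a per-minute rate for a clip recorded at fps."""
    if fps <= 0 or len(ear_values) == 0:
        raise ValueError("need a positive fps and at least one frame")
    blinks = count_blinks(ear_values, threshold, min_frames)
    return blinks * 60.0 * fps / len(ear_values)


def is_blink_rate_suspicious(ear_values: Sequence[float], fps: float,
                             min_expected_bpm: float = 5.0) -> bool:
    """Flag clips whose blink rate falls below a hypothetical lower bound."""
    return blinks_per_minute(ear_values, fps) < min_expected_bpm


if __name__ == "__main__":
    # Synthetic 10-second clip at 30 fps with no blinks at all.
    no_blink_clip = [0.3] * 300
    print(is_blink_rate_suspicious(no_blink_clip, fps=30))
```

A flag from this check is best fed into the cross-validation step together with the audio, metadata, and model-based results, since short clips or compressed video can produce a low blink count for genuine footage as well.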