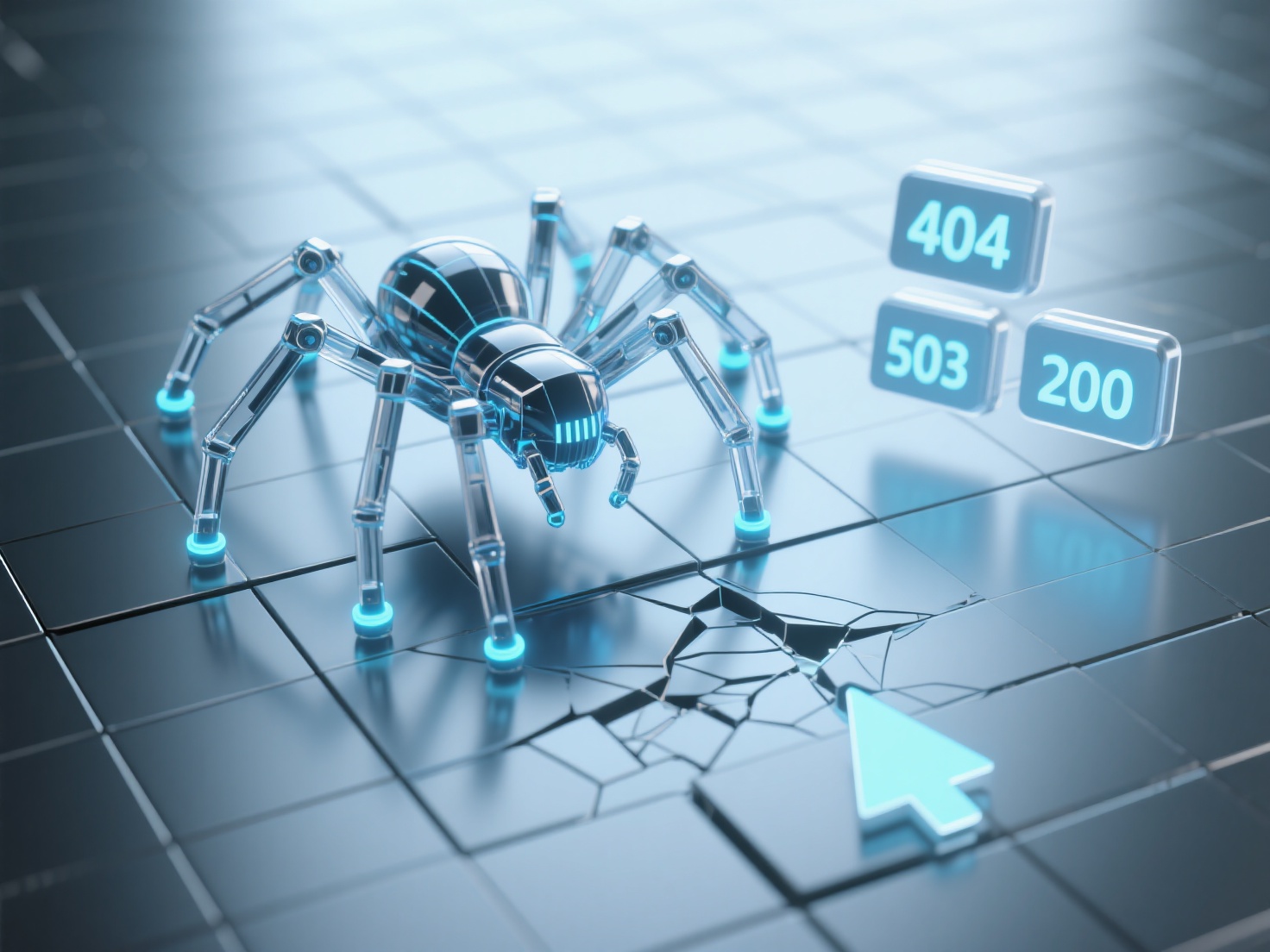

When an AI crawler fails to scrape a page, how can HTTP status codes help locate the problem?

When an AI crawler fails to fetch a page, the HTTP status code is the quickest clue to where the problem lies. Each class of status code points to a different type of failure:

- **4xx status codes (client-side issues)**
  - 404 Not Found: the target URL does not exist or the page has been removed.
  - 403 Forbidden: the server refused the crawler's request, often because of IP restrictions, user-agent blocking, or bot-protection rules. Note that robots.txt does not itself produce a 403: a compliant crawler reads robots.txt and skips disallowed URLs before sending any request.
  - 400 Bad Request: the request is malformed, for example because required parameters are missing or invalid.
- **5xx status codes (server-side issues)**
  - 500 Internal Server Error: an error occurred in the server-side application code.
  - 503 Service Unavailable: the server is temporarily unable to respond, for example because it is overloaded or under maintenance.
- **3xx status codes (redirection issues)**
  - 301 Moved Permanently: the content has a new permanent URL; confirm that the new URL is reachable.
  - 302 Found: the content is temporarily at another URL; crawling fails if the crawler does not follow redirects or gets caught in a redirect loop.

To troubleshoot, first record the exact status code returned for each failed URL, then trace the request path in the server logs, and finally check whether robots.txt disallows the AI crawler's user agent. Working through these steps narrows the problem down one layer at a time.
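The troubleshooting steps above can be sketched in Python using only the standard library. This is a minimal illustration, not a production crawler: the hint table, the `diagnose`, `fetch_status` and `robots_allows` helpers, and the `ExampleAIBot/1.0` user agent are all hypothetical names chosen for this example.

```python
import urllib.error
import urllib.request
from urllib.robotparser import RobotFileParser

# Hypothetical hint table: status code -> (short reason, next thing to check),
# condensed from the status-code categories described above.
HINTS = {
    301: ("Moved Permanently", "follow the Location header and confirm the new URL responds."),
    302: ("Found (temporary redirect)", "check that the crawler follows redirects without looping."),
    400: ("Bad Request", "inspect the request for malformed syntax or missing parameters."),
    403: ("Forbidden", "check IP, user-agent, and bot-protection rules in the server logs."),
    404: ("Not Found", "verify the URL and whether the page has been removed."),
    500: ("Internal Server Error", "look for application errors in the server logs."),
    503: ("Service Unavailable", "the server may be overloaded or in maintenance; retry later."),
}


def diagnose(status: int) -> str:
    """Return a troubleshooting hint for an HTTP status code."""
    if status in HINTS:
        reason, hint = HINTS[status]
        return f"{status} {reason}: {hint}"
    # Fall back to the status class (first digit) for codes not in the table.
    classes = {3: "redirection", 4: "client-side issue", 5: "server-side issue"}
    return f"{status}: {classes.get(status // 100, 'no error class matched')}"


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    """Stop urllib from following redirects so the 3xx code itself is recorded."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None  # decline the redirect; urllib then raises HTTPError


def fetch_status(url: str, user_agent: str = "ExampleAIBot/1.0") -> int:
    """Request url once and return the raw status code without following redirects."""
    opener = urllib.request.build_opener(_NoRedirect)
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with opener.open(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # Unfollowed 3xx, plus all 4xx and 5xx responses, arrive as HTTPError.
        return err.code


def robots_allows(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether the given robots.txt content permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

For example, `print(diagnose(fetch_status("https://example.com/page")))` records the raw code for one URL and prints a hint. Redirect-following is disabled on purpose: urllib follows 301 and 302 by default, which would hide the very code you are trying to record. `robots_allows` takes the robots.txt text as a string so the rule check can be run against a saved copy of the file.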